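Yesterday on Douban I saw someone post a status asking how to save their Douban notes to a local file. I happen to be learning Python at the moment, so I thought I'd write a small script for practice.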

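The HTML is parsed with the BeautifulSoup library. I skimmed the documentation and it looked simple enough, but I ran into a few odd problems I couldn't explain right away, so some parts are implemented in a rather roundabout way...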

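Usage: run the script with the username as its argument. If you haven't set a Douban username, it is a string of digits, namely whatever follows "people" in the URL of your profile page. For example (using the person who asked the original question):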

~$ python crawl.py duanzhang >> blogbackup.txt

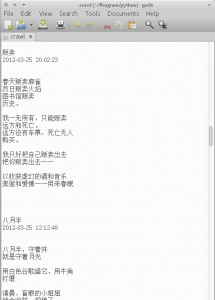

效果如下:

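One limitation: if a note contains images, the script only captures the HTML for that part. Anyone interested can extend it, although at that point you would probably want to save each note as an HTML file so the mixed text-and-image layout is preserved. If it is purely a backup, you could also just download the images into the same directory. Adapt it to your own needs.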
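As a starting point for the image extension mentioned above, here is a minimal sketch that pulls the image URLs out of a note's HTML so they could be downloaded afterwards. It is written for Python 3 and uses the standard library's `html.parser` instead of BeautifulSoup, purely so that it runs without extra packages. `ImageCollector`, `image_sources` and the sample markup are made up for illustration and are not part of the script.

```python
# Python 3 sketch, standard library only. Hypothetical helper names.
from html.parser import HTMLParser


class ImageCollector(HTMLParser):
    """Collect the src attribute of every <img> tag fed to the parser."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        # Self-closing tags such as <img .../> also end up here, because the
        # default handle_startendtag() delegates to handle_starttag().
        if tag == "img":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def image_sources(note_html):
    """Return the image URLs found in a fragment of note HTML, in order."""
    collector = ImageCollector()
    collector.feed(note_html)
    collector.close()
    return collector.sources


# Hand-written sample, not real Douban output.
sample = '<div class="note">text<br/><img src="http://example.com/a.jpg"/>more</div>'
print(image_sources(sample))
```

Each returned URL could then be fetched and saved next to the text backup; the download step is left out here.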

OK, enough talk, here's the code:

    # Python 2 script: urllib2 does not exist in Python 3.
    from bs4 import BeautifulSoup
    import urllib2
    import sys


    def get_each_blog(url):
        # Fetch a single note page and print its title, date and body.
        content = urllib2.urlopen(url)
        soup = BeautifulSoup(content)
        header = soup.find_all("div", attrs={"class": "note-header"})
        title = header[0].h1.get_text()
        date = header[0].span.get_text()
        print(title.encode("UTF-8"))
        print(date.encode("UTF-8"))
        print('\n')
        # The second div with class "note" is the one that holds the body.
        body = soup.find_all("div", attrs={"class": "note"})
        body = str(body[1])
        # Strip the wrapper div by hand and turn <br/> into line breaks.
        body = body.replace("""<div class="note" id="link-report">""", "")
        body = body.replace("</div>", "")
        body = body.replace("\n", "")
        body = body.replace("<br/>", "\n")
        print(body)
        print('\n')
        print('\n')


    def link_list(soup):
        # On a list page, each note is a div with class "rr" whose id
        # carries the note id after the hyphen.
        for i in soup.find_all("div", attrs={"class": "rr"}):
            blog_url = 'http://www.douban.com/note/' + i['id'].split('-')[1]
            get_each_blog(blog_url)


    pageurl = "http://www.douban.com/people/{0}/notes/".format(sys.argv[1])
    while pageurl:
        # Parse each list page once, then follow its <link rel="next">, if any.
        soup = BeautifulSoup(urllib2.urlopen(pageurl))
        link_list(soup)
        page_list = soup.find_all("link", rel="next")
        pageurl = page_list[0]['href'] if page_list else None
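The chain of `replace` calls in `get_each_blog` is easy to try out offline. The sketch below wraps the same four replacements in a helper and runs them on a hand-written snippet rather than on real Douban output. The name `note_html_to_text` and the sample string are hypothetical, and the sketch targets Python 3.

```python
def note_html_to_text(body_html):
    """Apply the same four replacements the script uses on the note body."""
    body_html = body_html.replace('<div class="note" id="link-report">', "")
    body_html = body_html.replace("</div>", "")
    # Drop the line breaks of the HTML source itself ...
    body_html = body_html.replace("\n", "")
    # ... and keep only the ones the author typed, which appear as <br/>.
    return body_html.replace("<br/>", "\n")


# Hand-written sample, assumed to resemble the serialized note div.
sample = '<div class="note" id="link-report">first line<br/>second\n line</div>'
print(note_html_to_text(sample))
```

Because this works on plain strings, any other tag inside the note (links, images) is left in the output untouched, which is the limitation described earlier.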

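Java person here: jsoup handles this with no trouble at all, using a jQuery-selector-like syntax to find elements. One annoyance, though: frequent requests trigger a CAPTCHA.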

Just seeing Java makes me nervous...
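On the CAPTCHA that shows up after frequent requests: a common mitigation is to pause between requests. Whether that is enough for Douban has not been verified here, and the interval below is an arbitrary placeholder. A minimal Python 3 sketch, where `Throttle` is a hypothetical helper and not something from the script or from jsoup:

```python
import time


class Throttle:
    """Make successive wait() calls at least min_interval seconds apart."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock    # injectable so the class can be tested without sleeping
        self._sleep = sleep
        self._last = None      # time of the previous wait(), None before the first call

    def wait(self):
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


throttle = Throttle(min_interval=0.01)  # placeholder value, tune as needed
for _ in range(3):
    throttle.wait()  # in the crawler this would precede each page fetch
```

Since the script above fetches every list page and every note page, calling `wait()` before each fetch would be the natural place to hook this in.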